Artificial Neural Networks: What They Are and How They Actually Work

- Stéphane Guy

- Feb 27


We talk a lot about artificial intelligence, about ChatGPT, facial recognition, autonomous vehicles. But behind all these technologies hides a fascinating mechanism: artificial neural networks. Loosely inspired by the way our brain works, these systems are transforming how machines learn. What exactly are they? How do they work? And what are they actually used for?

In short

Artificial neural networks are loosely modelled on the human brain and enable machines to learn and make decisions.

They consist of layers of interconnected neurons that process information hierarchically.

Their history begins in 1943 with the work of Warren McCulloch and Walter Pitts — well before modern computers existed.

They excel at image recognition, language processing, and many other complex tasks.

Despite their performance, they raise ethical questions around transparency, bias, and accountability.

Their mainstream adoption dates from the 2010s, driven by increased computing power and the availability of massive datasets.

What Is an Artificial Neural Network?

An Architecture Inspired by the Brain

An artificial neural network is a computer system that draws, very schematically, on the way our brain works. In practice, it is "an organised set of interconnected neurons".*

These networks enable "the resolution of complex problems such as computer vision or natural language processing." In the human brain, biological neurons transmit electrical signals to process information. In the same way, artificial neurons receive data, process it through mathematical computations, then pass the result on to the next neurons. Each connection between neurons has a "weight" that determines how much influence one neuron has on another.*

* Ibid.

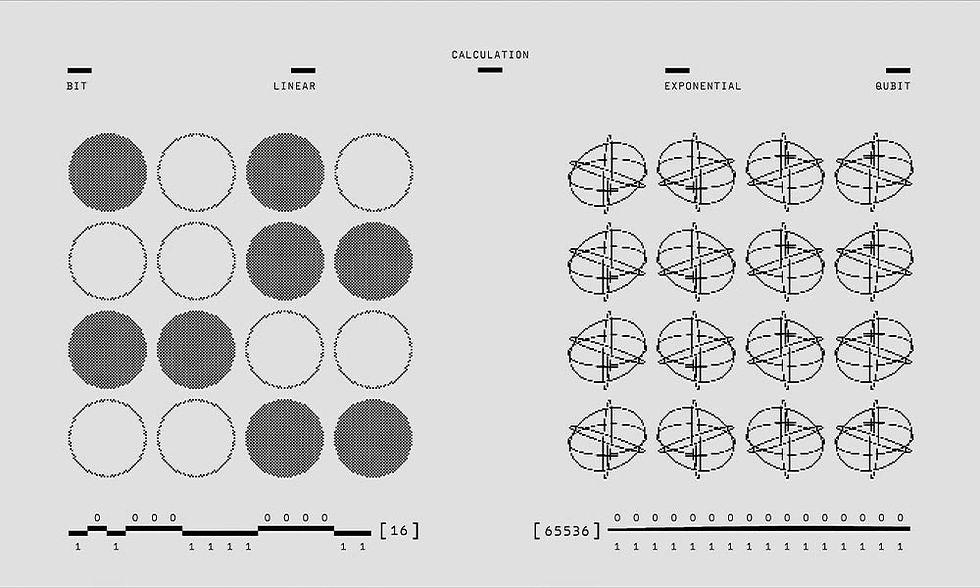

A Mathematical Model Above All

Don't be fooled by the poetic name. Behind "neural network" lies mathematics. Each neuron applies a mathematical function to its inputs: a weighted sum (each input multiplied by its corresponding weight, then added together) followed by an activation function that decides whether, and how strongly, the neuron should "fire".
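To make that concrete, here is a minimal sketch of a single artificial neuron. The input values, the weights, the extra bias term, and the choice of a sigmoid as activation function are all illustrative assumptions, not details taken from any particular system.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)

# Hypothetical values chosen only for illustration.
inputs = [0.5, -1.0, 2.0]
weights = [0.8, 0.2, -0.5]
bias = 0.1  # assumption: a constant offset, common in practice

output = neuron(inputs, weights, bias)
print(f"activation: {output:.3f}")
```

A sigmoid gives a graded answer between 0 and 1 rather than a strict fire/don't-fire decision; a hard threshold would work too, but smooth functions are easier to train.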

What makes these networks so powerful is their ability to automatically adjust those weights during a learning process. Think of it as the system progressively refining its understanding of the problem, making many mistakes at first, then fewer and fewer.

The Layers: Input, Hidden, and Output

A typical neural network has three types of layers. The input layer receives raw data: the pixels of an image, for instance, if the goal is to recognise a cat. The hidden layers perform the actual processing, extracting progressively more abstract features. The output layer produces the final result: in our example, the answer "yes, that's a cat" or "no, it isn't."

The more hidden layers a network has, the deeper it is. That is precisely what "deep learning" refers to: networks with many layers, sometimes hundreds of them.
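As a rough sketch of how data moves through those layers, the snippet below pushes four "pixel" values through one hidden layer and one output neuron. The layer sizes, the weights, and the 0.5 decision cut-off are made up for illustration; a real network would learn its weights and be far larger.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense_layer(inputs, weights, biases):
    """One layer: each row of `weights` holds the incoming weights of one neuron."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Feed the input through each (weights, biases) layer in turn."""
    activation = inputs
    for weights, biases in layers:
        activation = dense_layer(activation, weights, biases)
    return activation

# Hypothetical network: 4 inputs -> 3 hidden neurons -> 1 output neuron.
hidden = ([[0.2, -0.4, 0.1, 0.5],
           [-0.3, 0.8, -0.6, 0.1],
           [0.7, 0.1, 0.3, -0.2]], [0.0, 0.1, -0.1])
output = ([[0.6, -0.9, 0.4]], [0.05])

pixels = [0.0, 0.5, 1.0, 0.25]  # stand-in for raw input data
score = forward(pixels, [hidden, output])[0]
print("cat" if score >= 0.5 else "not a cat", round(score, 3))
```

Making the network "deeper" simply means adding more `(weights, biases)` pairs to the list passed to `forward`.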

Where Do Neural Networks Come From? A Brief History

1943: The Pioneers, McCulloch and Pitts

The story begins well before the digital era as we know it. In 1943, neurophysiologist Warren McCulloch and mathematician Walter Pitts published a landmark paper proposing what is considered the first mathematical model of an artificial neuron.* At the time, general-purpose electronic computers did not yet exist.

1958: Rosenblatt's Perceptron

Fifteen years later, American psychologist Frank Rosenblatt went further by creating the Perceptron — considered the first true neural network capable of learning from experience, even when the trainer makes mistakes.*

* Ibid.

The American press went wild. The New York Times announced a machine capable of recognising anything, thinking, and even exploring space. The excitement was enormous.*

* Ibid.

1969: The Blow Dealt by Minsky and Papert

In 1969, mathematicians Marvin Minsky and Seymour Papert published a book called Perceptrons that exposed the theoretical limits of these systems. They demonstrated that a simple, single-layer perceptron can only separate two classes with a straight line (or a flat plane), so it cannot solve problems that are not linearly separable, the classic example being the XOR function. As it happens, many real-world problems fall into that category.*

* Ibid.
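The limit Minsky and Papert pointed out can be sketched with two logical functions, AND and XOR. This is a standard textbook illustration rather than an example from the cited source, and the learning rate and number of epochs below are arbitrary choices. A single threshold neuron trained with a Rosenblatt-style rule learns AND, but no setting of its two weights and bias can get all four XOR cases right.

```python
def train_perceptron(samples, epochs=50, lr=1.0):
    """Rosenblatt-style learning rule for a single threshold neuron."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            predicted = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            error = target - predicted  # -1, 0, or +1
            w[0] += lr * error * x1
            w[1] += lr * error * x2
            b += lr * error
    return w, b

def accuracy(samples, w, b):
    """Fraction of samples the trained neuron classifies correctly."""
    correct = sum(
        (1 if w[0] * x1 + w[1] * x2 + b > 0 else 0) == target
        for (x1, x2), target in samples
    )
    return correct / len(samples)

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_acc = accuracy(AND, *train_perceptron(AND))
xor_acc = accuracy(XOR, *train_perceptron(XOR))
print("AND accuracy:", and_acc)  # reaches 1.0
print("XOR accuracy:", xor_acc)  # can never exceed 0.75
```

Adding a hidden layer removes this limitation, but the learning rule above cannot train one; that gap is what backpropagation later filled.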

The book's impact was devastating. Research on neural networks collapsed and funding dried up. The 1970s that followed brought the first "AI winter": a period when AI research, funding, and enthusiasm went into a prolonged slump.

The 1980s: The Renaissance

It took until the 1980s for neural networks to resurface. In 1986, David Rumelhart, Ronald J. Williams, and Geoffrey Hinton popularised the backpropagation algorithm, a method for efficiently training multi-layer networks that made it practical to build networks with hidden layers.*

* Ibid.

With the development of digital electronics and growing computing power, neural networks experienced a new surge. But one crucial element was still missing: data.

2012: The Deep Learning Turning Point

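The real liftoff came in the early 2010s. "In 2012, during a competition organised by ImageNet, a neural network managed for the first time to surpass a human in image recognition."* Strictly speaking, the 2012 milestone was a deep convolutional network (AlexNet) winning the ImageNet challenge by a wide margin over competing methods; results matching estimated human accuracy on that benchmark were reported a few years later.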

Why this sudden success? Two main reasons: the explosion of Big Data finally provided the massive quantities of data needed for training, and graphics processing units (GPUs) delivered the computing power required to process it. Since then, neural networks have never stopped advancing and have become the backbone of most modern artificial intelligence systems.

How Does a Neural Network Actually Work?

Learning: The Heart of the System

Unlike a conventional computer programme, a neural network is not programmed to solve a specific problem. It learns to solve it by observing examples.

A concrete case: you want to build a network that can tell dogs from cats. You show it thousands of already-labelled images ("dog" or "cat"). At first, the network will get it wrong constantly. But with each mistake, an algorithm slightly adjusts the weights of the connections between neurons to improve results.

It's an iterative process. After seeing enough examples and correcting enough errors, the network eventually "understands" what distinguishes a dog from a cat — the ears, the shape of the muzzle, the posture...
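Here is a minimal sketch of that trial-and-error loop, using a single neuron instead of a full network. The two features (muzzle length and ear pointiness), the six data points, the learning rate, and the number of passes are all invented for illustration; a real system would learn its own features from raw pixels.

```python
import math

def predict(features, weights, bias):
    """Probability that the example is a dog, according to the current weights."""
    z = sum(x * w for x, w in zip(features, weights)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def mean_loss(data, weights, bias):
    """Average cross-entropy between predictions and labels (lower is better)."""
    total = 0.0
    for features, label in data:
        p = predict(features, weights, bias)
        total -= label * math.log(p) + (1 - label) * math.log(1 - p)
    return total / len(data)

def train(data, epochs=200, lr=0.5):
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in data:
            error = predict(features, weights, bias) - label
            # Nudge each weight against its contribution to the error.
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
    return weights, bias

# Made-up features: (muzzle length, ear pointiness); label 1 = dog, 0 = cat.
data = [((0.9, 0.2), 1), ((0.8, 0.3), 1), ((0.7, 0.1), 1),
        ((0.2, 0.9), 0), ((0.3, 0.8), 0), ((0.1, 0.7), 0)]

loss_before = mean_loss(data, [0.0, 0.0], 0.0)
weights, bias = train(data)
loss_after = mean_loss(data, weights, bias)
print(f"loss before: {loss_before:.3f}, after: {loss_after:.3f}")
```

The loss starts at the level of a coin flip and shrinks as the weights are corrected, which is the "fewer and fewer mistakes" effect in miniature.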

Backpropagation

The algorithm that drives this learning is called backpropagation. The principle: calculate the error made by the network on its prediction, then "propagate" that error backwards, from the output layer toward the earlier layers, adjusting the weights as it goes.*

It's a little like learning to throw darts: every missed throw tells you how to adjust your aim to get closer to the target.
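For readers who want to see the mechanics, the sketch below runs one backpropagation step on a tiny network with two inputs, two hidden neurons, and one output. The weights, the training example, the squared-error loss, and the learning rate are assumptions chosen for illustration, and biases are left out to keep it short.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, W1, W2):
    """2 inputs -> 2 hidden neurons -> 1 output (biases omitted for brevity)."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in W1]
    output = sigmoid(sum(w * h for w, h in zip(W2, hidden)))
    return hidden, output

def loss(x, target, W1, W2):
    """Squared error between the network's output and the target."""
    _, output = forward(x, W1, W2)
    return 0.5 * (output - target) ** 2

def backprop(x, target, W1, W2):
    """Return the gradients of the loss with respect to W1 and W2."""
    hidden, output = forward(x, W1, W2)
    # Error signal at the output layer (chain rule through the sigmoid).
    delta_out = (output - target) * output * (1 - output)
    grad_W2 = [delta_out * h for h in hidden]
    # Propagate the error signal backwards to each hidden neuron.
    delta_hidden = [delta_out * W2[j] * hidden[j] * (1 - hidden[j])
                    for j in range(len(hidden))]
    grad_W1 = [[d * xi for xi in x] for d in delta_hidden]
    return grad_W1, grad_W2

# Hypothetical weights and a single training example.
W1 = [[0.5, -0.3], [0.8, 0.2]]
W2 = [0.7, -0.6]
x, target = [1.0, 0.5], 1.0

grad_W1, grad_W2 = backprop(x, target, W1, W2)

# One gradient-descent step: move each weight against its gradient.
lr = 0.5
new_W1 = [[w - lr * g for w, g in zip(wr, gr)] for wr, gr in zip(W1, grad_W1)]
new_W2 = [w - lr * g for w, g in zip(W2, grad_W2)]
print("loss before:", loss(x, target, W1, W2))
print("loss after: ", loss(x, target, new_W1, new_W2))
```

Each gradient says how much a given weight contributed to the error, which is the dart-throwing feedback expressed in numbers. Training repeats this step over many examples.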

Different Types of Networks

There are several neural network architectures, each suited to specific tasks.

Feedforward networks are the simplest: information flows in one direction only, from input to output.*

Convolutional Neural Networks (CNNs) excel at image processing. They apply filters that "scan" an image to detect local patterns such as edges or textures.*

Recurrent Neural Networks (RNNs) are designed for sequential data like text or speech. They have a form of "memory" that allows them to take context into account.*
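The last two ideas can be sketched in a few lines each. The filter values and the recurrent weights below are hand-picked assumptions for illustration; in a real CNN or RNN they would be learned during training, and the layers would be far larger.

```python
import math

def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding) and sum the element-wise products.

    Like most deep learning libraries, this does not flip the kernel.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [sum(image[r + i][c + j] * kernel[i][j]
             for i in range(kh) for j in range(kw))
         for c in range(out_w)]
        for r in range(out_h)
    ]

# 4x4 toy image: dark left half (0), bright right half (1).
image = [[0, 0, 1, 1]] * 4
# Hand-picked filter that responds to a dark-to-bright vertical edge.
vertical_edge = [[-1, 1],
                 [-1, 1]]
feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # the response is strongest in the middle column, at the edge

def rnn_step(x, h_prev, w_x, w_h):
    """One recurrent step: the new state mixes the current input with the old state."""
    return math.tanh(w_x * x + w_h * h_prev)

def run_rnn(sequence, w_x=0.5, w_h=0.9):
    h = 0.0  # the "memory" starts empty
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h)
    return h

# Same final input (0.0), different history: the final state differs.
print(run_rnn([1.0, 1.0, 0.0]), run_rnn([0.0, 0.0, 0.0]))
```

The convolution only ever looks at a small window of the image, which is why it picks up local patterns. The recurrent step carries `h` from one element to the next, which is the "memory" described above.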

What Are Neural Networks Actually Used For?

Image and Video Recognition

This is arguably the most spectacular application. Convolutional neural networks can recognise objects and faces, and even help diagnose diseases from medical imagery.

On your smartphone, they're why your camera can automatically detect that you're shooting a sunset and adjust the settings accordingly.

In medicine, neural networks analyse X-rays and MRI scans to detect tumours or abnormalities with ever-increasing accuracy. They are also applied to satellite and telescope imagery, in Earth observation and astronomy, to detect anomalies or study the stars.

Natural Language Processing

ChatGPT, Google Translate, voice assistants like Siri or Alexa — all are built on neural networks. These systems can understand human language, translate text, generate automatic summaries, or even write articles.

What's remarkable is that these networks weren't programmed with grammar rules or syntactic structures. They simply "read" billions of sentences and learned language patterns on their own.

Autonomous Driving

Tesla and other manufacturers use neural networks to let their vehicles "see" and understand their environment. Onboard cameras capture real-time images, and the network identifies other vehicles, pedestrians, road signs...*

It's a remarkable technical feat — but it also raises real questions: what happens when it goes wrong? Who is responsible?

Finance and Fraud Detection

In banking, neural networks analyse millions of transactions to detect suspicious behaviour that might indicate fraud. They can also predict market trends or assess the credit risk of a borrower.

Content Creation

Tools like DALL-E, Midjourney, and Stable Diffusion use neural networks to generate images from text descriptions. Suno AI, which we have already covered on 360°IA, can create complete pieces of music with or without lyrics. The possibilities seem endless. But this power also comes with significant challenges.

The Limits and Challenges of Neural Networks

The "Black Box" Problem

One of the biggest issues with deep neural networks is their opacity. You know what goes in (data) and what comes out (a prediction), but what happens in between remains largely mysterious.

Even the researchers who build these systems often struggle to explain why a network made one decision rather than another. This opacity is a serious problem in critical domains like medicine, justice, or finance, where every decision needs to be justifiable.

Imagine: an AI rejects your mortgage application. You ask why. The answer? "The algorithm decided so." Not exactly satisfying.

Algorithmic Bias

Neural networks learn from data. If that data contains biases, and most real-world datasets do, the network will reproduce and often amplify them.

For example, if you train a network to screen CVs using historical data where men were systematically favoured, the network will likely learn that male candidates are preferable.

This has already happened in practice. Amazon had to scrap an AI recruitment tool that discriminated against women, precisely because it had been trained on biased data.*

Resource Consumption

Training a deep neural network requires enormous computing power and consumes vast amounts of energy. Training some recent language models has been estimated to emit as much carbon as several transatlantic flights. It's an environmental issue we cannot ignore, and one we examine in our article on the environmental cost of AI.

The Risk of Overfitting

A network can become so specialised on its training examples that it loses the ability to generalise to new situations. This is called overfitting.*

Think of a student who memorises answers to past exam questions without understanding the underlying concepts: it works for familiar exercises, but not for new problems.
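The effect is easy to reproduce on toy data. The sketch below does not use a neural network: to keep it short, it swaps in a "memoriser" that copies the label of the closest training example, and compares it with a simple one-threshold rule. The data, including the two deliberately mislabelled training points, is invented for illustration.

```python
# Toy data: a single made-up feature x; the true rule is "label 1 when x > 5".
# Two training labels (x=2 and x=8) are deliberately wrong, to simulate noise.
train = [(1, 0), (2, 1), (3, 0), (4, 0), (6, 1), (7, 1), (8, 0), (9, 1)]
test = [(0.5, 0), (1.6, 0), (2.4, 0), (4.4, 0),
        (6.4, 1), (7.6, 1), (8.4, 1), (9.5, 1)]

def memoriser(x):
    """Overfitted model: copy the label of the closest training example."""
    _, nearest_label = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest_label

def fit_threshold(data):
    """Simple model: pick the cut-off t that makes the fewest training errors."""
    def errors(t):
        return sum((1 if x >= t else 0) != label for x, label in data)
    return min((x for x, _ in data), key=errors)

threshold = fit_threshold(train)

def simple_rule(x):
    return 1 if x >= threshold else 0

def accuracy(model, data):
    return sum(model(x) == label for x, label in data) / len(data)

for name, model in [("memoriser", memoriser), ("simple rule", simple_rule)]:
    print(f"{name:12s} train={accuracy(model, train):.2f} "
          f"test={accuracy(model, test):.2f}")
```

The memoriser scores perfectly on the examples it has seen, noise included, and then stumbles on new ones. The simpler rule gets a couple of training examples "wrong" yet generalises better, which is the student analogy in code.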

Towards More Ethical and Transparent AI?

Explainable AI (XAI)

In response to the black box problem, researchers are developing explainable AI (XAI) techniques that help us better understand how networks reach their decisions.*

Regulation

The European Union published the AI Act in 2024, a regulation that governs the use of artificial intelligence and requires a degree of explainability for high-risk systems.

These regulatory frameworks are essential for preventing abuses, but they must strike the right balance between protection and innovation.

Diversity Within Teams

To reduce bias, diversity within the teams that design AI systems is essential. When a homogeneous group builds these systems on its own, blind spots are far more likely.

FAQ

Is a neural network really like a human brain?

No, it's a simplification. Artificial neural networks are very loosely inspired by how the brain works, but they are fundamentally mathematical models. The human brain is vastly more complex and operates according to principles we don't yet fully understand.

Can a neural network learn on its own, without human involvement?

Yes and no. A network can automatically adjust its parameters during training, but it needs data prepared by humans, an architecture defined by humans, and objectives set by humans. Its autonomy is, in that sense, relative.

Why did it take so long for neural networks to go mainstream?

Mainly because of technological limitations. Even though the concepts had existed since the 1940s, computers lacked the power needed to train complex networks, and data was scarce. It wasn't until the arrival of Big Data and GPUs in the 2010s that everything took off.

Will neural networks replace humans in certain jobs?

Some repetitive or pattern-recognition tasks are indeed at risk of being automated. But neural networks lack creativity, ethical judgment, and contextual understanding. They are better thought of as tools that augment human capabilities rather than replacements.

How can you tell whether a decision made by an AI is fair?

That is precisely the challenge at the heart of explainable AI and regulation. There is no perfect answer yet. Transparency, independent audits, and human oversight in critical decisions are important avenues for limiting the risks.

Can you trust a neural network?

It depends on the context. For sorting your holiday photos — absolutely. For deciding a medical diagnosis or a prison sentence? Far more caution is needed, along with human supervision and ethical safeguards. Trust should never be unconditional.
